If you're running Meta ads in 2025, you've probably noticed something: the old "spray and pray" approach to creative testing is dead.

Post-Andromeda, Meta's algorithm rewards meaningful creative variation, not 47 versions of the same ad with slightly different text overlays.

Nick Theriot, who manages $1M+/month across 10+ ad accounts, recently broke down his exact system for scaling winners. The framework is elegant: Iterations (change ONE thing like location or person) before Variations (change MULTIPLE things like format, hook pattern, or style).

The problem? Creating these variations traditionally requires new photoshoots ($500-$5,000 each), days or weeks of turnaround, and gambling budget on unproven concepts.

What if you could test 10 visual iterations in an afternoon, before committing to a single reshoot?

The Post-Andromeda Creative Testing Framework

Meta's Andromeda update fundamentally changed how the algorithm evaluates creative. When you upload ads that are too visually similar, Meta consolidates delivery to a single version (wasting your test budget), flags them as "creative similar" in Ads Manager, and limits reach because it sees redundant content.

Nick's rule of thumb: "If Meta can't tell them apart visually, neither can your audience."

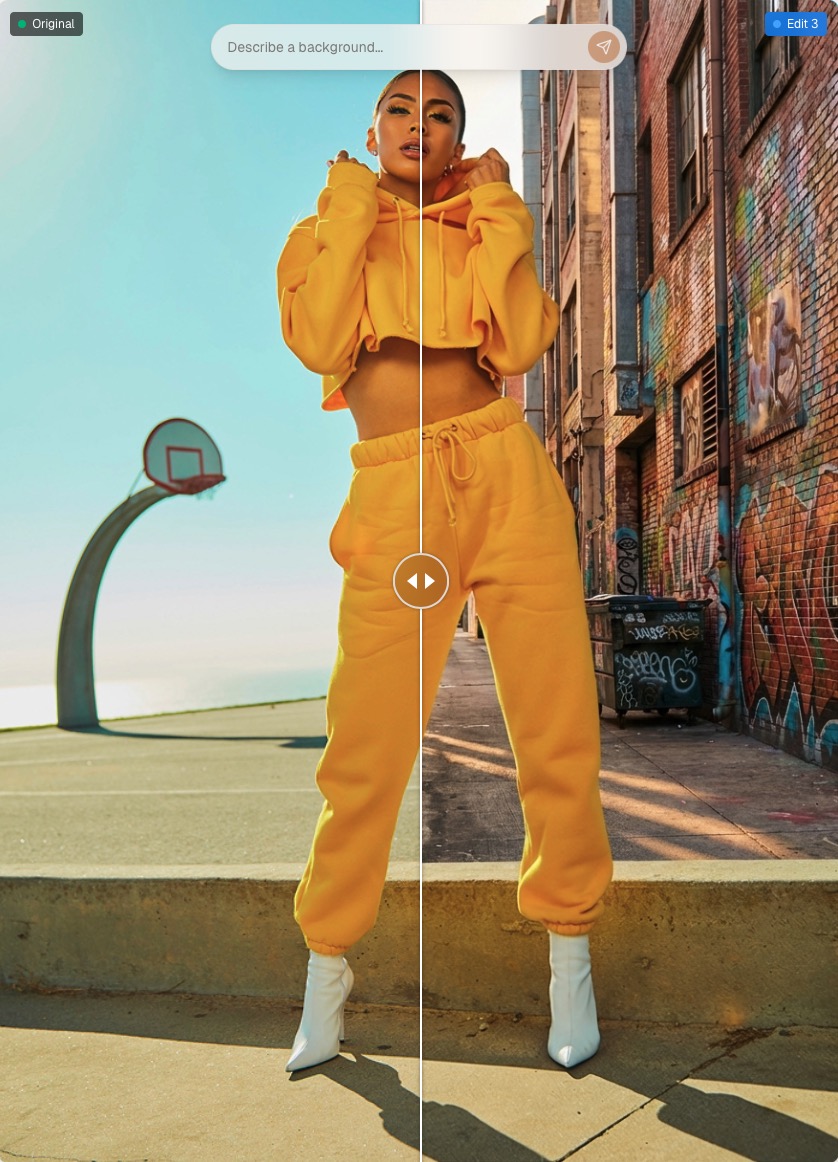

This means swapping a white-wall bathroom for a white-wall living room isn't different enough. But bathroom → car → poolside? That's three distinct visual contexts Meta will treat as separate creative.

Iteration Type #1: Location Swaps

The fastest way to breathe new life into a winning static image ad is changing the environment.

- The Traditional Way Book a new location, hire photographer, coordinate talent schedules, edit and retouch. Timeline: 1-2 weeks. Cost: $500-$2,000.

- The AI-Powered Way With StaticKit's background replacement, you can test location hypotheses before investing in production. Upload your winning creative, describe the new environment in natural language ("Replace background with a sunny outdoor café patio, European style, morning light"), and StaticKit automatically extracts your product/subject, generates the new environment, and adjusts lighting to match.

Pro Tip: Test Lighting Variations Too

The same location can feel completely different with alternate lighting. Golden hour creates warm, aspirational, lifestyle vibes, perfect for wellness, travel, and premium products. Bright studio lighting feels clean, trustworthy, and professional, ideal for SaaS, B2B, and health products. Neon/moody lighting is edgy, youthful, and bold, great for fashion, nightlife, and Gen Z brands.

StaticKit includes one-click lighting presets, so you can test whether your winning creative performs differently in "golden hour" vs. "studio" lighting, all without re-shooting.

Iteration Type #2: Subject Variations

Nick's second iteration lever is testing different people: "Say your winning ad uses a creator in their 20s. Test different ethnicities, different ages, different demographics. This lets you appeal to different subsets WITHIN your target market."

If your winning ad shows a 25-year-old using your product, you might be missing the 45-55 demographic who can't see themselves in the image, different cultural contexts that resonate with other segments, and gender variations that could unlock new audiences.

StaticKit's reference image feature lets you extract a subject from one image, apply your product/context to that subject, and maintain pose and composition while changing the person.

From Iterations to Variations: Changing Multiple Elements

Once you've exhausted single-variable iterations, Nick recommends moving to variations: changing multiple elements while keeping the core message.

- Lifestyle → Studio Change environment and lighting using background replacement plus lighting presets.

- Single product → Flatlay Adjust composition and context with reference image compositing.

- Portrait → Landscape Change aspect ratio and framing with smart resize (AI extends canvas intelligently).

- Polished → Raw/organic Shift overall aesthetic with lighting presets and natural language adjustments.

Smart Aspect Ratio Resizing for Placement Testing

Different Meta placements have different optimal ratios: Feed works best at 1:1 or 4:5, Stories/Reels need 9:16, and right column uses 1.91:1.

Instead of cropping (which loses content) or letterboxing (which looks lazy), StaticKit's smart resize intelligently extends your image to fit new aspect ratios.

The Hypothesis-Driven Testing Workflow

Nick emphasizes that every iteration needs a hypothesis: "I'm not just cranking out creative for the sake of it."

Before iterating, document your winner: visual elements (location, subject, lighting, composition), message (core desire, awareness level, target persona), and performance (CTR, conversion rate, ROAS, frequency before fatigue).

Then generate hypotheses like "Outdoor setting will feel more aspirational" (test: pool/patio background), "Older demographic needs to see themselves" (test: 45+ model reference), or "Stories placement is underperforming due to crop" (test: smart resize to 9:16).

Real Cost Comparison

For a realistic testing sprint with 8 iterations (4 locations, 2 demographics, 2 lighting styles):

- Traditional Production Location scout, photographer, talent, editing = $1,100-$2,300 over 7-10 days.

- AI-Powered Production StaticKit (free, open source) + Gemini API usage (~$0.50-$2.00) + 1-2 hours of your time = ~$2.00, same day.

The math is obvious. But more importantly: you can test hypotheses before committing production budget. If your "poolside location" iteration bombs, you've lost $0.25 in API costs, not $800 in location fees.

Getting Started: Your First Iteration Sprint

Install StaticKit (clone the repo, run npm install, then npm run dev). Grab a free Gemini API key from Google AI Studio and you're ready to go.

Gather your top 3 performing static image ads from the last 90 days. These are your iteration candidates.

Run a location sprint: for each winner, generate 3 location variations (current location as control, opposite environment like indoor→outdoor, and aspirational setting where your customer wants to be).

Test lighting variations on your best-performing location variant: original lighting, golden hour preset, and studio/clean preset.

Upload to Meta with proper naming conventions like [Winner_Name]_LOC_pool or [Winner_Name]_LIGHT_goldenhour. After 3-5 days of data, analyze which iteration types drive improvement, then double down.

Stop Guessing, Start Iterating

The brands winning on Meta in 2025 aren't spending more on creative. They're iterating smarter.

Nick Theriot's framework gives you the strategic foundation: iterations (one variable) before variations (multiple variables), visually distinct enough for Meta to differentiate, and every test tied to a hypothesis.

StaticKit gives you the execution speed: location swaps in seconds, lighting variations with one click, smart resizing for every placement, and zero watermarks with zero subscriptions.

The combination? A creative testing machine that lets you find winners faster and scale them further, without burning budget on production gambles.